How did a mere 1.6MB GIF image bloat a Discourse backup by 377GB?

Discourse, the open-source internet forum software, has reported a case in which a single GIF image greatly inflated the size of a site backup.

How Jennifer Aniston and Friends Cost Us 377GB (and Broke EXT4 Hardlinks) - Discourse

https://blog.discourse.org/2026/04/how-jennifer-aniston-and-friends-cost-us-377gb-and-broke-ext4-hardlinks/

Jake Goldsborough, a developer at Discourse, was investigating a problem in which a site with hundreds of gigabytes of uploaded data would run out of disk space during backup creation, causing the process to freeze. Along the way, he discovered that although the site appeared to hold hundreds of gigabytes of uploads, a large share of them were duplicate files with identical content.

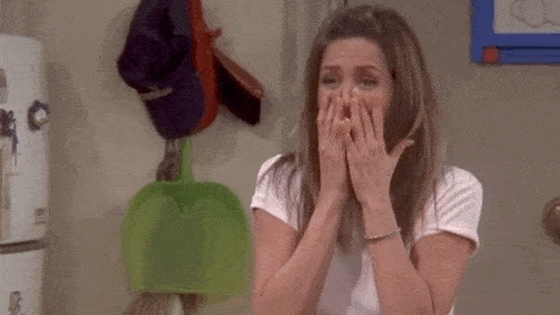

The main culprit behind the bloat was a GIF image of Rachel Green, Jennifer Aniston's character in the TV show 'Friends', jumping for joy.

At about 1.6MB, the GIF is not particularly large for a single image. However, the same GIF had been saved as a separate file 246,173 times, which added 377GB to the backup. According to Goldsborough, the GIF was used repeatedly across the site, in posts, private messages, and elsewhere.

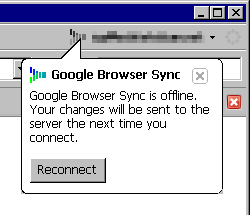

The GIF was saved as separate files because of Discourse's 'secure uploads' feature. Secure uploads handles images and files safely by separating them according to their visibility. For example, if an image that was first used in a private message is later used in a public post, it is stored as a separate file even though the content is identical.

Therefore, if popular GIF images or other images are used repeatedly in posts, private messages, or reposted in different categories, the number of separate files with the same content will increase each time. Goldsborough explains, 'While this duplication is not very noticeable when you are using the site normally, it becomes a big problem when backing up.'

In fact, one customer's site had a total of 432GB of uploaded files, but on examination only 26GB of that was unique data once duplicates were removed.

To stop duplicate files from causing backup failures, Discourse introduced a process that handles files with identical content as a group. The method uses each file's SHA-1 hash, a fingerprint computed from the file's content, to group identical files together. Within each group, only the first file is downloaded, and the remaining files are created as 'hard links', which are additional filenames that point to the same data on disk.
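The grouping and linking steps can be illustrated with a minimal Python sketch. This is not Discourse's code: the function names `sha1_of`, `group_by_sha1`, and `dedupe_with_hardlinks` are hypothetical, and the sketch assumes the files already sit on local disk, whereas the process described above avoids downloading the duplicates in the first place.

```python
import hashlib
import os
from collections import defaultdict
from pathlib import Path


def sha1_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Return the hex SHA-1 digest of a file, read in chunks."""
    digest = hashlib.sha1()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def group_by_sha1(paths):
    """Map each SHA-1 digest to the list of paths with that content."""
    groups = defaultdict(list)
    for path in paths:
        groups[sha1_of(path)].append(path)
    return groups


def dedupe_with_hardlinks(paths) -> int:
    """Replace every duplicate with a hard link to the first file of its group.

    Returns the number of hard links created.
    """
    linked = 0
    for members in group_by_sha1(paths).values():
        source, *duplicates = members
        for dup in duplicates:
            dup.unlink()            # drop the redundant copy
            os.link(source, dup)    # recreate the name as a hard link
            linked += 1
    return linked
```

After this runs, every name in a group shares one inode, so the content occupies disk space only once no matter how many filenames refer to it.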

Because the backup archive can be created with the hard link relationships preserved, the ideal outcome is that only 26GB needs to be downloaded and the resulting backup is also only 26GB.

However, problems arose in production. When the team started backing up a large site, replacing files with hard links worked fine up to around 64,000 files, but hard link creation began to fail at around 65,000 files. The cause was a limit in 'ext4', a file system widely used on Linux: only about 65,000 hard links can be created for a single underlying file.

The backup itself completed successfully thanks to a fallback that switched to an alternative method once hard links could no longer be created. However, instead of covering all 246,173 duplicate files with a single download as intended, the process performed approximately 181,000 fallback downloads on top of the initial one. Goldsborough recalls that while this was better than downloading all 246,173 files separately, it was not the result the team had hoped for.

Discourse then made a further change. The backup still creates hard links as before, but when the link limit is reached and an error occurs, it makes a local copy of the original file and uses that copy as the new source for subsequent hard links. With this approach there is no need to determine in advance how many hard links a given file system allows, because the process simply switches to a fresh copy when the limit is actually hit. 'It can be used on any file system and requires no special additional configuration,' Goldsborough explains.
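The copy-on-limit approach might look roughly like the following Python sketch. It is an illustration under assumptions, not the actual Discourse implementation: the function name `link_with_rotation` and its injectable `link` parameter are hypothetical, and the sketch assumes the file system reports an exhausted link count with the `EMLINK` error, which is how Linux signals "too many links".

```python
import errno
import os
import shutil
from pathlib import Path


def link_with_rotation(source: Path, targets, link=os.link) -> int:
    """Create each target as a hard link to `source`.

    When the file system refuses another link (EMLINK), copy the current
    source to the target instead and link later targets to that copy.
    Returns the number of extra physical copies that were made.
    """
    copies = 0
    current = source
    for target in targets:
        try:
            link(current, target)
        except OSError as exc:
            if exc.errno != errno.EMLINK:
                raise                      # unrelated failure: do not hide it
            shutil.copy2(current, target)  # local copy, no re-download
            current = target               # the copy becomes the new source
            copies += 1
    return copies
```

The `link` parameter exists only so the behavior can be exercised with a stand-in that enforces a small artificial limit. If the per-file limit were exactly 65,000 names, the 246,173 duplicates described above would end up as about four physical copies rather than roughly 181,000 extra downloads.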

Goldsborough stated that the limit on the number of hard links in ext4 is not merely an arbitrary restriction, but serves to prevent specific bugs and attacks. He then pointed out that in production environments, problems that were not noticed beforehand may be discovered, and even if the overall system works fine, other problems may arise in extreme cases such as processing being concentrated on a single GIF. Therefore, it is necessary to anticipate such exceptions.

in Software, Web Service, Posted by log1b_ok