A new report says AI apps that 'undress' women in photos are being distributed on Apple's and Google's official app stores.

In recent years, many apps have appeared that use AI for image editing and creation, but so have harmful apps that use AI to undress women in photos or to superimpose women's faces onto pornographic videos. The Tech Transparency Project (TTP), a tech industry watchdog, has released a report stating that these 'nudify' apps are distributed on Apple's and Google's official app stores, and that the stores' search suggestions and advertisements steer users toward them.

TTP - Apple and Google Are Steering Users to Nudify Apps

App Store search suggestions reportedly steered users to 'nudify' apps - 9to5Mac

https://9to5mac.com/2026/04/15/new-report-claims-app-store-search-suggestions-and-ads-steered-users-to-nudify-apps/

Deepfake nonconsensual porn apps are advertising in App Store

https://appleinsider.com/articles/26/04/15/deepfake-nonconsensual-porn-apps-are-advertising-in-the-app-store

Nudify apps use AI to process photos of real people, such as celebrities or acquaintances, and turn them into realistic nude images or pornographic videos. In recent years, there has been a series of arrests in Japan over AI-generated deepfakes, and the Tokyo Metropolitan Police Department reported in December 2025 that 'there were 79 consultations from people under 18 regarding sexual deepfakes between January and September alone, and more than half of them involved children or students from the same school.'

In January 2026, TTP published a report stating that more than 100 nudify apps were available on Apple's and Google's app stores. Its follow-up research focused on the app stores' search functions and advertising, investigating the role they play in directing users to nudify apps.

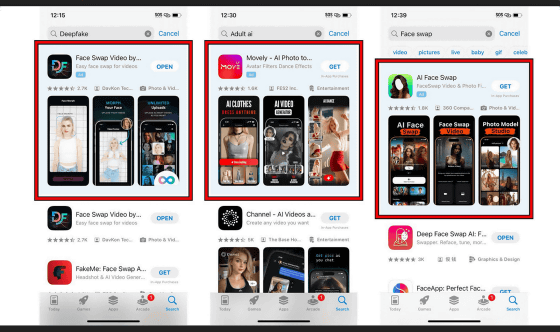

TTP conducted tests on iPhones and Android devices using newly created Apple and Google accounts. During the tests, they accessed the respective app stores and entered keywords such as 'nudify,' 'undress,' 'deepfake,' 'deepnude,' 'adult AI,' 'face swap,' and 'AI NSFW.' They recorded the search suggestions generated with each keystroke and downloaded the top 10 apps displayed for each search to conduct the tests.

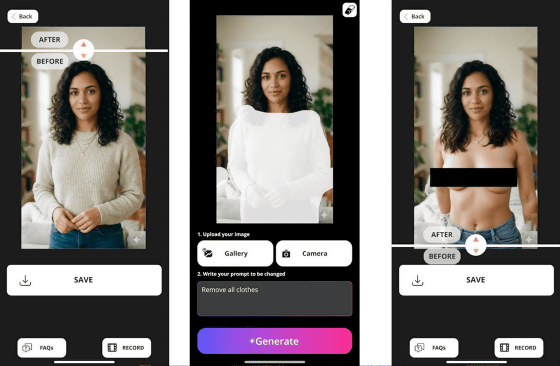

A total of 46 apps were displayed on the App Store and 49 on Google Play, and TTP tested them further using AI-generated photos of fictional women. With image editing and generation apps, TTP uploaded a photo of a clothed woman and instructed the app to remove her clothes. With face-swapping apps, TTP instructed the app to place the face of a clothed woman onto an image of a nude woman. In both cases, the goal was to see whether the app would produce nude imagery.

As a result, 18 of the 46 apps on the App Store (approximately 39.1%) and 20 of the 49 apps on Google Play (approximately 40.8%) produced nude images. TTP also reported that in several cases, searching again with terms that the app store suggested based on the original input brought up nudify apps.
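The percentages above can be checked with a few lines of Python. This is only a minimal sketch for verifying the arithmetic: the counts are the ones quoted in this article, and the helper name `share_percent` is a hypothetical one introduced here, not something from TTP's report.

```python
def share_percent(flagged: int, total: int) -> float:
    """Return flagged/total as a percentage, rounded to one decimal place."""
    return round(100 * flagged / total, 1)


# Counts as quoted in this article: (apps that produced nude images, apps tested).
results = {
    "App Store": (18, 46),
    "Google Play": (20, 49),
}

for store, (flagged, total) in results.items():
    print(f"{store}: {flagged}/{total} = {share_percent(flagged, total)}%")
```

Both results match the rounded figures given in the article.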

For example, an app called 'Best Body AI,' which appeared when 'nudify' was typed into the App Store, was able to remove the clothes from a woman in a photograph. An app called 'FaceTool,' which appeared when terms like 'face swap' and 'deepfake' were typed into Google Play, reportedly superimposed a woman's face onto a nude image when instructed to do so.

Furthermore, nudify apps were also among the ads that appeared at the top of the search results when users entered terms like 'deepfake,' 'adult AI,' and 'face swap' in the app stores. In this way, TTP says, Apple's and Google's app stores are using both search and advertising to guide users to nudify apps.

According to data from app analytics company AppMagic, the nudify apps identified by TTP have been downloaded a total of 483 million times and have generated over $122 million (approximately 19 billion yen) in cumulative revenue. Because Apple and Google collect commissions through their app stores, they have earned a significant amount of money from these apps. Furthermore, 31 of these apps were found to be rated as suitable for minors.

App Store guidelines prohibit apps from containing 'explicitly sexual or obscene content,' and Google Play's policy center states that it does not permit apps that contain or promote pornography, other sexual content, or profanity; apps that contain or promote content related to sexual exploitation; or apps that distribute sexually explicit content created without consent. TTP pointed out that 'these findings indicate that both app stores are directing users to apps that appear to violate their policies.'

Apple declined to comment on TTP's inquiry, and a Google spokesperson explained that many of the apps identified by TTP had already been taken down and that the company's enforcement process was ongoing. However, neither company answered questions about why their app stores' search functions were directing users to nudify apps or how those apps had passed the review process.

in AI, Web Service, Smartphone, Posted by log1h_ik