Google has released 'Gemini Robotics-ER 1.6,' an AI model for robots

Google has announced Gemini Robotics-ER 1.6, an AI model for robots with enhanced visual and spatial understanding. The model is designed to reason about its surroundings and can call Google Search and other functions while doing so.

Gemini Robotics ER 1.6: Enhanced Embodied Reasoning — Google DeepMind

We're rolling out an upgrade designed to help robots reason about the physical world. 🤖

Gemini Robotics-ER 1.6 has significantly better visual and spatial understanding in order to plan and complete more useful tasks. Here's why this is important 🧵 pic.twitter.com/rxT1lkYZZB

— Google DeepMind (@GoogleDeepMind) April 14, 2026

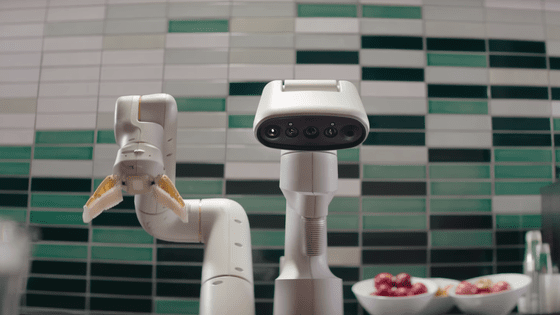

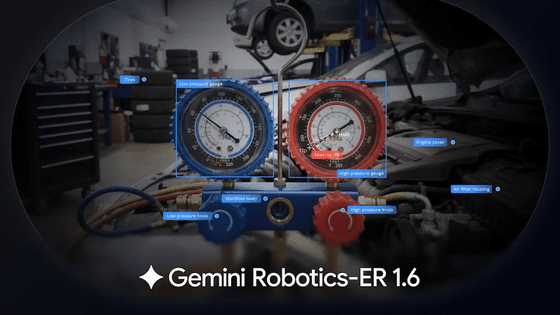

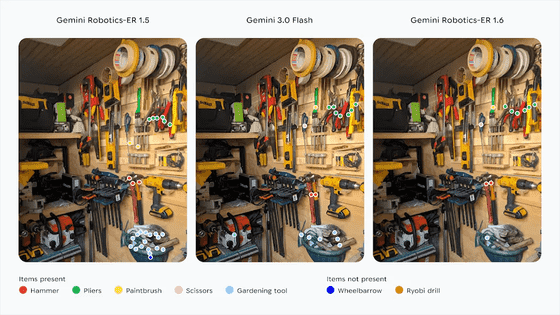

Gemini Robotics-ER 1.6 offers stronger spatial and physical reasoning than previous models. Its object detection has also improved: it can count objects, identify the smallest of the visible objects, and respond to complex prompts such as 'Show me all the objects small enough to fit in this cup.'
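Counting and pointing results come back from the model as structured text that robot software then has to interpret. The following minimal sketch assumes a format like the one Google documents for earlier Gemini Robotics-ER models: a JSON list of `{"point": [y, x], "label": ...}` entries with coordinates normalized to 0-1000. Whether 1.6 uses exactly this format is an assumption, and `RAW_REPLY` is invented sample data, not real model output.

```python
import json
from collections import Counter

# Invented sample reply (hypothetical). The [y, x] order and 0-1000
# normalization are assumed from earlier Gemini Robotics-ER documentation.
RAW_REPLY = """
[
  {"point": [412, 230], "label": "coin"},
  {"point": [455, 610], "label": "coin"},
  {"point": [300, 720], "label": "paperclip"}
]
"""


def parse_points(reply: str, width: int, height: int) -> list:
    """Convert normalized [y, x] points into pixel x/y coordinates."""
    result = []
    for item in json.loads(reply):
        y_norm, x_norm = item["point"]
        result.append({
            "label": item["label"],
            "x": round(x_norm / 1000 * width),   # scale 0-1000 to image width
            "y": round(y_norm / 1000 * height),  # scale 0-1000 to image height
        })
    return result


def count_by_label(points: list) -> Counter:
    """Count how many points the model returned for each label."""
    return Counter(p["label"] for p in points)


if __name__ == "__main__":
    pts = parse_points(RAW_REPLY, width=1280, height=720)
    print(pts)
    print(count_by_label(pts))
```

Keeping coordinates normalized in the reply and converting them on the robot side means the same answer works for any camera resolution.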

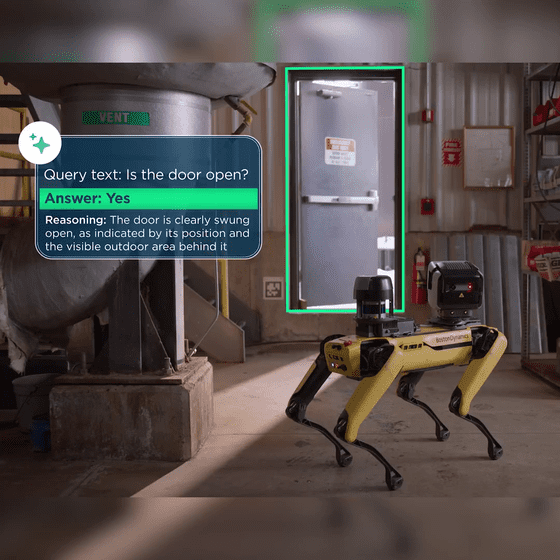

It can also reason about the state of a scene, answering questions such as 'Is the door open?'. To carry out tasks, it can natively call tools such as Google Search to retrieve information, as well as vision-language-action (VLA) models and other third-party user-defined functions.
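The Gemini API handles function calling through its own SDK and request format, which this sketch does not use. It is a minimal, self-contained illustration of the robot-side half of the loop, assuming a model that emits each call as JSON with hypothetical `name` and `args` fields. The functions `get_gripper_status` and `move_to` are hypothetical stand-ins for user-defined functions or a VLA controller.

```python
import json
from typing import Any, Callable, Dict


# Hypothetical user-defined functions a robot developer might expose.
def get_gripper_status() -> dict:
    return {"open": True, "holding": None}


def move_to(x: float, y: float) -> dict:
    # A real robot would forward this to a motion controller or a VLA model.
    return {"status": "ok", "target": [x, y]}


# Registry mapping tool names (as the model would emit them) to functions.
TOOLS: Dict[str, Callable[..., Any]] = {
    "get_gripper_status": get_gripper_status,
    "move_to": move_to,
}


def dispatch(call_json: str) -> str:
    """Run one model-emitted function call and return its result as JSON."""
    call = json.loads(call_json)
    name = call["name"]
    if name not in TOOLS:
        # Report unknown tools back to the model instead of crashing.
        return json.dumps({"error": f"unknown tool: {name}"})
    result = TOOLS[name](**call.get("args", {}))
    return json.dumps({"name": name, "result": result})


if __name__ == "__main__":
    print(dispatch('{"name": "move_to", "args": {"x": 0.4, "y": 0.1}}'))
    print(dispatch('{"name": "fly"}'))
```

Returning an error object for unknown tools, rather than raising, lets the result be fed back to the model so it can choose a different action.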

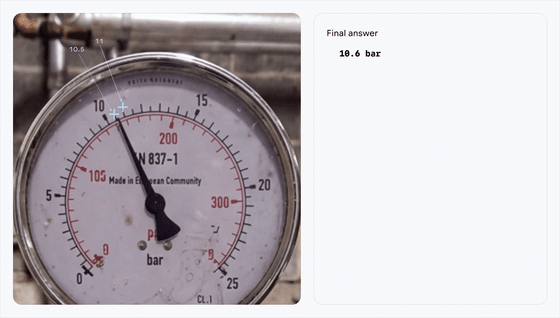

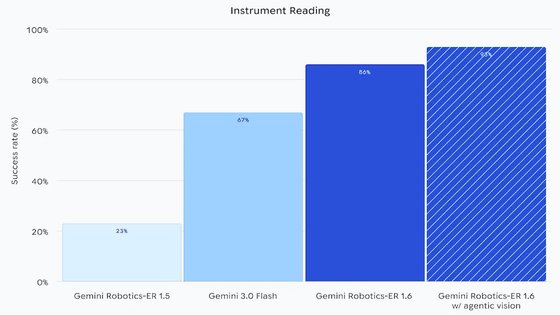

A new capability in this version is reading analog instruments.

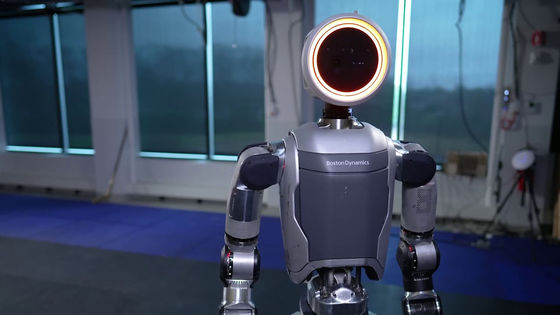

Gemini Robotics-ER 1.6 reads analog instruments through visual reasoning. Its instrument-reading accuracy is significantly higher than that of its predecessor, Gemini Robotics-ER 1.5, thanks to 'Agentic Vision,' which zooms in on images to estimate proportions and intervals and obtain accurate readings. The capability was developed in response to the needs of Google partner Boston Dynamics.
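Estimating "proportions and intervals" on a dial comes down to simple arithmetic once the needle and scale endpoints have been located. The following sketch is not the model's method. It only illustrates that arithmetic, assuming a linear dial, image coordinates with y pointing down, and angles measured clockwise from straight up. All function names and the 0-10 bar example gauge are hypothetical.

```python
import math


def needle_angle(pivot, tip) -> float:
    """Angle of the needle in degrees, clockwise from straight up (image y grows downward)."""
    dx = tip[0] - pivot[0]
    dy = tip[1] - pivot[1]
    return math.degrees(math.atan2(dx, -dy))


def gauge_reading(needle_deg: float, min_deg: float, max_deg: float,
                  min_value: float, max_value: float) -> float:
    """Linearly map a needle angle onto the gauge's value range."""
    if max_deg == min_deg:
        raise ValueError("scale endpoints must differ")
    fraction = (needle_deg - min_deg) / (max_deg - min_deg)  # proportion of the sweep
    return min_value + fraction * (max_value - min_value)


def snap_to_tick(value: float, tick: float) -> float:
    """Round a reading to the nearest tick interval printed on the dial."""
    return round(value / tick) * tick


if __name__ == "__main__":
    # Hypothetical 0-10 bar gauge sweeping from -135 to +135 degrees.
    reading = gauge_reading(27.0, -135.0, 135.0, 0.0, 10.0)
    print(reading, snap_to_tick(reading, 0.5))
```

With a 270-degree sweep, a needle at 27 degrees has covered 162/270 = 0.6 of the scale, which corresponds to 6 bar.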

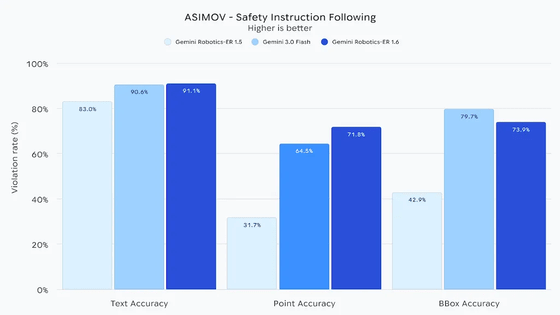

Its adherence to physical safety constraints has also improved significantly. For example, it now follows constraints such as 'do not handle liquids' and 'do not lift objects weighing more than 20 kg,' and makes safer decisions as a result. It is also better at identifying safety hazards in its surroundings.
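In the model, constraints like these are given in natural language and respected in its own reasoning. A developer might still want a deterministic check on the robot side before executing a plan. The following minimal sketch encodes the two example constraints as such a guard. The `PlannedAction` fields and the `filter_plan` helper are hypothetical and are not part of any Gemini API.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class PlannedAction:
    verb: str              # hypothetical action name, e.g. "pick_up"
    object_name: str
    est_mass_kg: float     # estimated mass of the object
    contains_liquid: bool  # whether handling it involves a liquid


# Mirrors the example constraint 'do not lift objects weighing more than 20 kg'.
MAX_LIFT_KG = 20.0


def violations(action: PlannedAction) -> List[str]:
    """Return the constraints a planned action would break (empty if none)."""
    problems = []
    if action.contains_liquid:
        problems.append("handles liquid")
    if action.verb == "pick_up" and action.est_mass_kg > MAX_LIFT_KG:
        problems.append(f"mass {action.est_mass_kg} kg exceeds {MAX_LIFT_KG} kg")
    return problems


def filter_plan(plan: List[PlannedAction]) -> List[PlannedAction]:
    """Keep only the actions that violate no constraint."""
    return [a for a in plan if not violations(a)]


if __name__ == "__main__":
    plan = [
        PlannedAction("pick_up", "box", 25.0, False),
        PlannedAction("pick_up", "mug", 0.3, True),
        PlannedAction("pick_up", "book", 1.2, False),
    ]
    for a in plan:
        print(a.object_name, violations(a))
    print([a.object_name for a in filter_plan(plan)])
```

Returning a list of reasons, rather than a plain boolean, makes it possible to log or report why an action was rejected.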

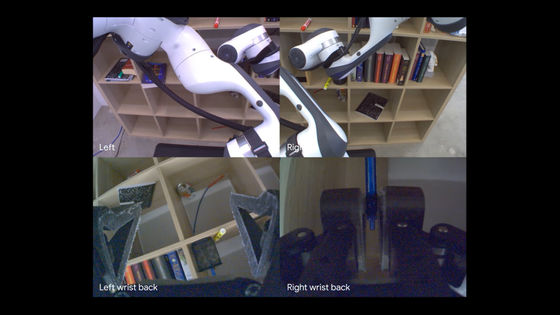

Multi-view reasoning has also been enhanced, so the model can better understand how images captured by multiple cameras relate to one another.

Google stated, 'For robots to truly be useful in our daily lives and industries, they need to be able to reason about the physical world, not just follow instructions. From navigating complex facilities to reading pressure gauge needles, it is the embodied reasoning ability of robots that will bridge the gap between the digital and the physical.'

in AI, Posted by log1p_kr