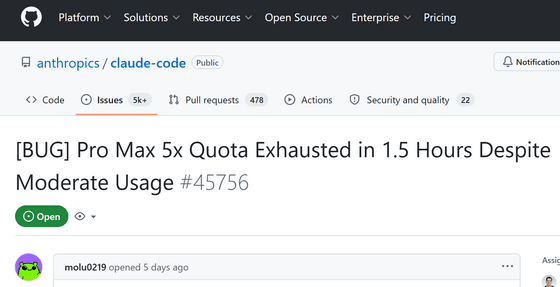

There are reports that Anthropic's Claude quickly reaches its usage limit with typical use, rendering it unusable.

molu0219, who subscribes to the paid plan of Anthropic's AI service 'Claude,' posted on GitHub that 'it quickly hits the usage limit and becomes unusable.'

[BUG] Pro Max 5x Quota Exhausted in 1.5 Hours Despite Moderate Usage · Issue #45756 · anthropics/claude-code

https://github.com/anthropics/claude-code/issues/45756

Claude applies usage limits in 5-hour windows. If your usage reaches the cap within a 5-hour window, the service is restricted until 5 hours have elapsed since you started using it.
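The window rule described above can be sketched in a few lines of Python. This is only an illustration of the rule as the article states it: the function name `restricted_until`, the `used` and `limit` units, and the example date are all hypothetical, and Anthropic's actual accounting is not public.

```python
from datetime import datetime, timedelta

# The 5-hour window length comes from the article; everything else is illustrative.
WINDOW = timedelta(hours=5)

def restricted_until(first_use, used, limit):
    """Return when the restriction lifts, or None if usage is under the limit.

    `used` and `limit` are in arbitrary hypothetical units; the real quota
    metric is not described in the article.
    """
    if used >= limit:
        # Restricted until 5 hours after the window's first use.
        return first_use + WINDOW
    return None

start = datetime(2025, 1, 1, 15, 0)  # hypothetical date; window opens at 3 PM
print(restricted_until(start, used=100, limit=100))
print(restricted_until(start, used=40, limit=100))
```

In this sketch, a window opened at 3 PM that hits its cap stays restricted until 8 PM, while a window still under the cap is not restricted at all.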

The plan that molu0219 is subscribed to is the 'Max 5x' plan, which costs $100 per month (approximately 15,900 yen). The 'Max 5x' plan is characterized by having five times the usage limit compared to the standard 'Pro' plan, which costs $20 per month (approximately 3,180 yen).

Anthropic announces Max plan for Claude, offering 5 times the usage limit of Pro for $100/month and 20 times for $200/month - GIGAZINE

molu0219 stated that on the day the problem occurred, he was working intensely on development for five hours, from 3 PM to 8 PM. During those five hours, he made 2,715 API calls, the maximum context length reached approximately 970,000 tokens, and the automatic context summarization function was triggered twice. molu0219 accepts that usage limits would apply under such heavy usage.

The problem arose afterward. When molu0219 used Claude for typical purposes such as light development work and Q&A after 8 PM, he reached his usage limit in just an hour and a half.

molu0219 analyzed the cause and found that a Claude session left open in the background was performing a large number of cache reads. For billing purposes, input read from the cache is priced at one-tenth of normal input. Working backward from the point at which the limit was reached, however, molu0219 suspects that cache reads count toward the usage limit at their full token amount rather than at one-tenth.
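To show why the weighting of cache reads matters, here is a minimal sketch comparing the two hypotheses. Only the one-tenth ratio comes from the article; the function `quota_tokens`, the `cache_discount` parameter, and the token counts are hypothetical, and this is not Anthropic's actual formula.

```python
def quota_tokens(fresh_input, cache_read, cache_discount):
    """Tokens counted toward the limit under a given cache-read discount.

    cache_discount=10 counts cache reads at one-tenth (the billing ratio
    cited in the article); cache_discount=1 counts them at the full amount.
    """
    return fresh_input + cache_read / cache_discount

# Hypothetical call: a small new prompt on top of a large cached context.
fresh, cached = 10_000, 900_000

discounted = quota_tokens(fresh, cached, cache_discount=10)
full_rate = quota_tokens(fresh, cached, cache_discount=1)

print(discounted, full_rate, full_rate / discounted)
```

With these made-up numbers, counting cache reads at the full amount consumes roughly nine times as much quota per call as counting them at one-tenth, which is the kind of gap molu0219 suspects.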

With Claude Code, users on a paid plan can put up to 1 million tokens into the context window. The larger the context window, the more information can be processed at once, and Anthropic highlighted this benefit when it expanded the context window to 1 million tokens.

Claude Opus 4.6 and Claude Sonnet 4.6 now support input of 1 million tokens - GIGAZINE

molu0219 stated, 'If cache reads are calculated at full rate, then a wider context window actually increases the number of input tokens per API call, making it easier to reach usage limits.' He argued that the 1 million token context window, which was supposed to be a selling point, is actually causing problems for users.
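The argument can be illustrated with simple arithmetic. The sketch below assumes every API call re-sends the whole context as input and that those tokens count toward the limit in full, which is molu0219's hypothesis rather than a confirmed behavior. The function name, the call count, and the 200,000-token comparison point are hypothetical.

```python
def session_input_tokens(calls, context_tokens):
    """Total input tokens if each call re-sends the full context.

    Assumes a constant context size and full-rate counting of cache reads.
    """
    return calls * context_tokens

calls = 100  # hypothetical number of API calls in a session

small = session_input_tokens(calls, 200_000)    # hypothetical smaller context
large = session_input_tokens(calls, 1_000_000)  # 1 million token context

print(small, large, large // small)
```

Under these assumptions the same number of calls counts five times as many input tokens with the larger context, so the limit would be reached correspondingly sooner.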

Furthermore, he stated that 'a large number of idle sessions that are simply running in the background and not being actively used by the user should not be consuming the API.'

Boris of the Claude Code team responded in the comments section of the issue, describing measures such as 'narrowing the standard context window' and 'actively working on streamlining background tasks.'

Anthropic, the company behind Claude, is experiencing rapid growth, with sales more than tripling in just three months. While they have announced plans to increase their computing power, they are currently believed to be experiencing a shortage of computing resources.

AI demand explodes, but computing power becomes a constraint; OpenAI, which had made massive investments, is aiming for a comeback - GIGAZINE

Anthropic has announced that it will be tightening usage limits as a measure to address the load, and has received feedback such as 'Claude is clearly not working as well as it used to' and 'Cache expiration has been shortened, leading to increased usage.'

in AI, Web Service, Posted by log1d_ts