The results of 'MLPerf Inference v6.0,' a benchmark that measures the inference and video generation performance of AI servers, have been released, making it easy to compare NVIDIA's and AMD's performance.

MLCommons, an industry consortium that evaluates the performance of AI servers, has released the results of its inference benchmark, 'MLPerf Inference v6.0.' The benchmark measures processing speed when running large language models and video generation models, and NVIDIA and AMD have already published results for their own servers.

MLCommons Releases New MLPerf Inference v6.0 Benchmark Results - MLCommons

NVIDIA Extreme Co-Design Delivers New MLPerf Inference Records | NVIDIA Technical Blog

https://developer.nvidia.com/blog/nvidia-extreme-co-design-delivers-new-mlperf-inference-records/

AMD Delivers Breakthrough MLPerf Inference 6.0 Results

https://www.amd.com/en/blogs/2026/amd-delivers-breakthrough-mlperf-inference-6-0-results.html

The tests in MLPerf Inference are updated to keep pace with advancements in AI. MLPerf Inference v6.0 introduced a test to check the execution speed of 'gpt-oss-120b' developed by OpenAI, as well as a video generation speed test using Alibaba's video generation AI 'Wan2.2-T2V-A14B-Diffusers'.

AI server providers such as Cisco and Oracle have already submitted their MLPerf Inference v6.0 test results to MLCommons, and NVIDIA and AMD have also submitted test results for servers equipped with their own AI chips. A summary page compiling the test results from each company has also been published.

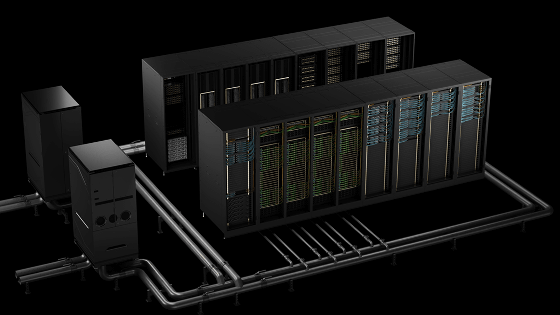

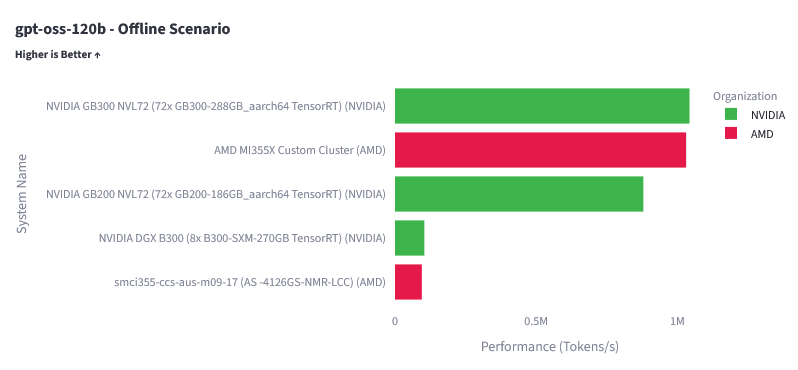

The following summary page shows the gpt-oss-120b execution speed results submitted by AMD and NVIDIA. The top performer is the NVIDIA GB300 NVL72, and the AMD MI355X custom cluster recorded a score very close to it.

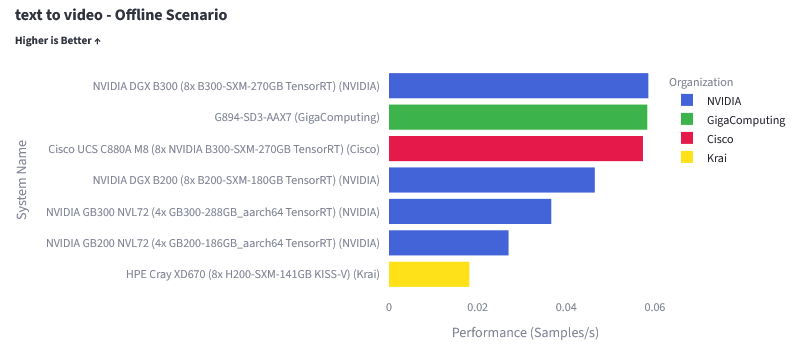

The results of the video generation speed test using Wan2.2-T2V-A14B-Diffusers are as follows. At the time of writing, only the results for servers equipped with NVIDIA AI chips were available.

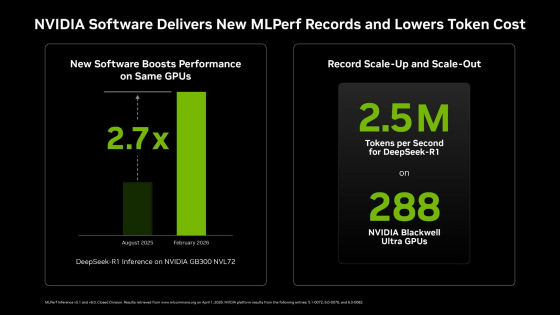

NVIDIA is promoting the fact that 'software optimizations have resulted in a 2.7-fold improvement in the processing performance of the NVIDIA GB300 NVL72 between August 2025 and February 2026.'

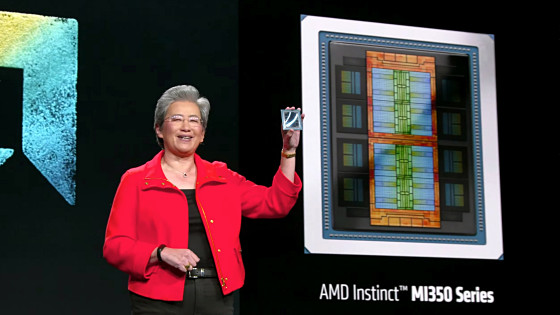

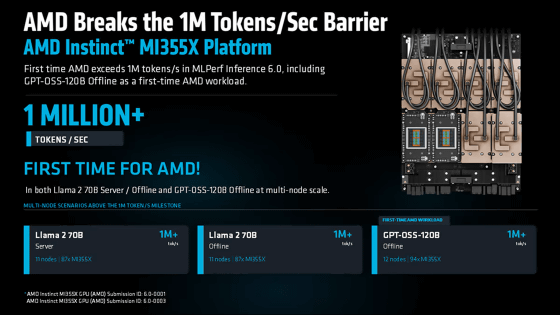

AMD is highlighting that the MI355X has reached a processing speed of 1 million tokens per second for the first time.

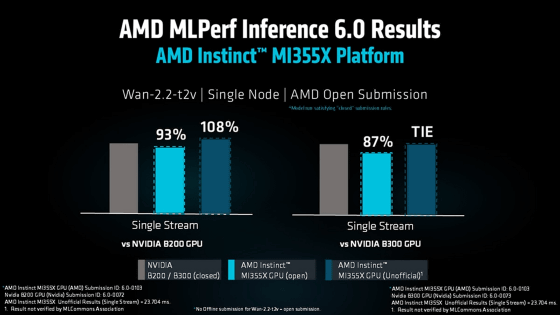

Furthermore, AMD has published the results of a video generation speed test using Wan2.2-T2V-A14B-Diffusers on its official website. According to AMD's announcement, the MI355X initially scored 93% of the B200's result, and subsequent optimizations raised that figure to 108%.
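To put the two AMD figures in perspective, the short Python sketch below works out the improvement they imply. It assumes that both percentages are measured against the same B200 baseline and that a higher score is better; the function name `relative_gain` is purely illustrative and does not come from AMD or MLCommons.

```python
def relative_gain(before_pct: float, after_pct: float) -> float:
    """Return the speedup factor implied by two scores
    expressed as percentages of the same baseline."""
    if before_pct <= 0:
        raise ValueError("before_pct must be positive")
    return after_pct / before_pct


# Figures quoted from AMD's announcement: 93% of B200 before, 108% after.
gain = relative_gain(93.0, 108.0)
print(f"{gain:.3f}x, i.e. about {(gain - 1) * 100:.1f}% faster")
# prints "1.161x, i.e. about 16.1% faster"
```

Under those assumptions, the optimizations correspond to roughly a 16% improvement in the MI355X's own score.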
