Anthropic sues US Department of Defense, OpenAI and Google employees support Anthropic

Anthropic, an AI development company, has filed a lawsuit against the U.S. Department of Defense (DoD) for designating the company as a supply chain risk. Approximately 40 employees from Google and OpenAI filed an amicus brief supporting Anthropic's claims. The signatories acknowledge that their companies are in fierce competition with Anthropic, but argue that the government's designation will have a negative impact on technological development and safety discussions across the industry.

Anthropic PBC v. US Department of War, 3:26-cv-01996 – CourtListener.com

Exhibit Amici Curiae Brief of Employees of OpenAI and Google in Their Personal C – #24, Att. #1 in Anthropic PBC v. US Department of War (ND Cal., 3:26-cv-01996) – CourtListener.com

https://www.courtlistener.com/docket/72379655/24/1/anthropic-pbc-v-us-department-of-war/

OpenAI and Google employees rush to Anthropic's defense in DOD lawsuit | TechCrunch

https://techcrunch.com/2026/03/09/openai-and-google-employees-rush-to-anthropics-defense-in-dod-lawsuit/

Employees across OpenAI and Google support Anthropic's lawsuit against the Pentagon | The Verge

https://www.theverge.com/ai-artificial-intelligence/891514/anthropic-pentagon-lawsuit-amicus-brief-openai-google

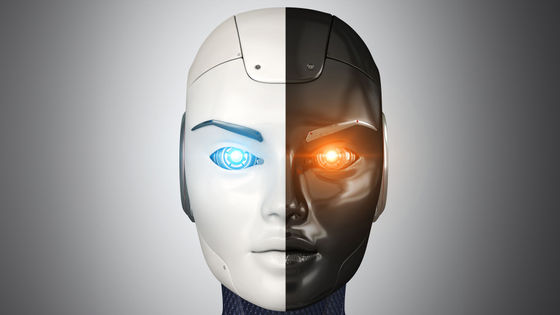

Anthropic had set a 'red line' prohibiting the use of its technology for mass surveillance in the U.S. or for fully autonomous weapons systems that could kill or injure without human intervention. The Department of Defense sought authority to use AI for any lawful purpose without restrictions from private companies, but Anthropic refused, resulting in its blacklisting as a national security risk.

AI company Anthropic officially designated as a 'supply chain risk to US national security,' Anthropic vows legal battle - GIGAZINE

The supply chain risk designation, a highly unusual measure typically applied to companies associated with foreign adversaries, would not only cost Anthropic military contracts but also force other companies using its model, Claude, to replace their systems in order to continue doing business with the Department of Defense.

The Department of Defense signed a new contract with OpenAI almost immediately after imposing sanctions on Anthropic, but this contract has sparked strong opposition from within OpenAI.

OpenAI agrees to AI contract with Department of Defense, shortly after Anthropic negotiations collapse - GIGAZINE

Anthropic said that the supply chain risk designation has no legal justification and that it has no choice but to challenge it in court. It has filed a lawsuit against the U.S. Department of Defense seeking to have the designation revoked.

In its complaint, Anthropic alleges that the Department of Defense's supply chain risk designation violates the Administrative Procedure Act (APA) and the related statute, 10 USC 3252. It argues that the designation is an arbitrary, evidence-free decision and an abuse of authority intended to punish Anthropic for not yielding in contract negotiations, even though Anthropic is a U.S. company with no ties to a foreign adversary.

Anthropic also alleges violations of the First Amendment, which guarantees free expression, arguing that the government's actions are retaliation aimed at silencing Anthropic's particular viewpoint on AI safety and stifling democratic debate. Anthropic has asked the court to declare the President's directive, the Department of Defense order, and the risk designation letter unconstitutional and invalid.


Additionally, about 40 employees of Google and OpenAI have filed an amicus brief urging the court to grant Anthropic's motion, arguing that doing so would protect America's technological superiority and democratic values.

Signatories from Google and Google DeepMind include Chief Scientist Jeff Dean, Research Director Edward Grevenstedt, Product Director Cathy Koreveck, Senior Staff Software Engineer Sanjeev Dhanda, Staff Software Engineer Sean Tartz, Senior Research Engineers Noah Siegel and Zhendong Wang, and Senior Software Engineers Sarah Kogan and Kate Wolverton.

Signatories from OpenAI include security engineer Grant Birkinbine and technical staff members Anna Louisa Blackman, Aaron Friel, Leo Gao, Manas Joglekar, Jordan Sitkin, Jonathan Ward, Jason Wolff, Jelle Zijlstra, and Cathy Ye.

The brief asserts that Anthropic's red lines reflect legitimate concerns that require strong technical constraints. It concludes that, in the absence of a comprehensive legal framework governing AI, developer-imposed technical and contractual constraints are the last line of defense against devastating misuse.

The employees who filed the amicus brief criticized the government's move as unjustified retaliation that will stifle frank discussion about AI safety. They raised the scientific concern that current AI technology is prone to hallucination and argued that it is too early to fully automate life-and-death decisions. They also warned that the mere existence of an AI-powered domestic surveillance system would create psychological pressure that inhibits free speech and political activity among citizens.

While Anthropic's lawsuit is underway, the White House is reportedly preparing an executive order to formally ban federal agencies from using Anthropic's AI tools.

Trump to hit Anthropic with executive order to remove 'woke' AI Claude

https://www.axios.com/2026/03/09/trump-white-house-anthropic-executive-order
