Why is it difficult to build a data center in space?

Elon Musk's SpaceX

Physics of Data Centers in Space | Die wunderbare Welt von Isotopp

https://blog.koehntopp.info/2026/02/25/physics-of-data-centers-in-space/

Computer fans spin because the act of computing physically generates heat, and they become quiet when the processor is idle and there is very little electrical activity. Modern processors use clock gating and power gating techniques to completely shut down large parts of the chip when not needed, minimizing power consumption.

However, when actual work begins, billions of transistors switch, tiny capacitors charge and discharge, and electrons move through resistive paths. This switching energy is converted directly into heat, so the higher the clock speed, the more heat is generated. That heat is a physically unavoidable by-product of computation.

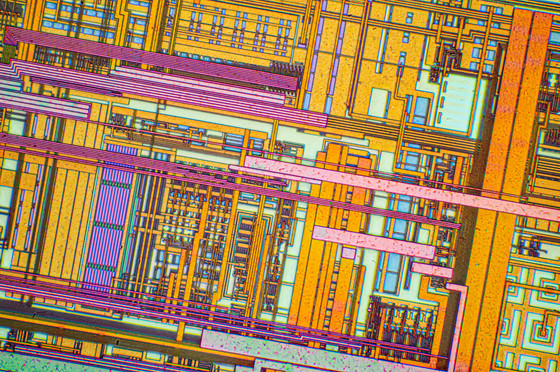

Leakage current in silicon chips rises exponentially as they heat up. This current does not contribute to calculations; it simply generates heat, which raises the temperature further and creates a vicious cycle. At temperatures around 100°C, leakage current becomes a serious design issue, requiring extra power just to maintain the circuitry. Designers are forced to address this by reducing clock speeds, increasing timing margins, or increasing voltage, resulting in a significant decrease in performance per watt.

by Alexander Klepnev

In addition, operating at high temperatures accelerates the migration of metal atoms inside the chip and the deterioration of the insulating layer, shortening the lifespan of the hardware. The purpose of cooling is to keep the chip within a temperature range where switching power dominates leakage power and high clock speeds can be maintained.

However, in the vacuum of space, there is no air or liquid to carry away heat, so all heat removal must be by thermal radiation.

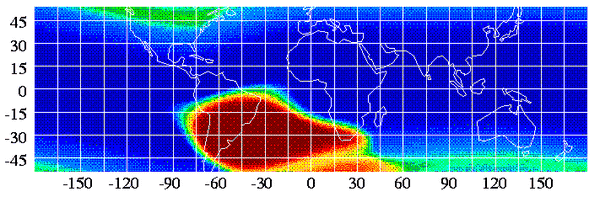
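As a rough illustration of what radiation-only cooling implies, the Stefan-Boltzmann law gives the power an ideal surface radiates per unit area as emissivity × σ × T⁴. The sketch below is not from the original post. It assumes a hypothetical 700 W heat load, an emissivity of 0.9, a single-sided radiator, and a cold background with no sunlight or Earth glow falling on it, all of which are simplifications.

```python
SIGMA = 5.670374419e-8  # Stefan-Boltzmann constant, W / (m^2 K^4)


def radiator_area_m2(heat_w, temp_k, emissivity=0.9, sink_temp_k=0.0):
    """Area of an idealized single-sided radiator that rejects heat_w watts.

    Assumptions (illustrative only): uniform radiator temperature,
    no absorbed sunlight or Earth infrared, background at sink_temp_k.
    """
    net_flux = emissivity * SIGMA * (temp_k**4 - sink_temp_k**4)  # W / m^2
    return heat_w / net_flux


# Hypothetical load: 700 W, on the order of one high-end accelerator.
for temp_c in (40, 80, 120):
    area = radiator_area_m2(700.0, temp_c + 273.15)
    print(f"radiator at {temp_c:>3} degC: {area:.2f} m^2")
```

Because the radiated power grows with the fourth power of absolute temperature, a hotter radiator can be much smaller, which is why running hot is attractive in a vacuum even though it hurts the chip.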

Another problem is the charged particles flying through space called cosmic rays. They are mainly made up of protons and atomic nuclei, and are thought to come from violent astronomical phenomena such as solar activity and supernova explosions, as well as from extremely powerful celestial bodies outside the galaxy. While we on Earth are not usually aware of them, cosmic rays are constantly passing around us, and while they provide clues to exploring violent phenomena in space, they also have an impact on artificial satellites and astronauts.

On Earth, the magnetic field acts as a protective layer, shielding electronics from harmful charged particles, like a magnetic cocoon. Low Earth orbit (LEO) is within this protection, but there are anomalous regions where errors occur frequently, such as the South Atlantic Anomaly (SAA). At higher altitudes, such as geostationary orbit, the magnetic field protection changes, exposing electronics to a harsher particle environment.

Radiation is a problem because modern chips are made up of extremely small transistors that store tiny electrical charges, and a single particle's impact can disrupt their operation. When a high-energy particle passes through a chip, it can cause a single-event upset (SEU), flipping a bit in a memory cell (for example, a 0 to a 1) and corrupting data.

Preventing this data corruption requires error-correcting code (ECC) and parity checks, which come at the cost of circuit area, power, and delay. Additionally, destructive events such as latch-up, in which a particle strike creates a short circuit inside the chip, and cumulative effects such as total ionizing dose (TID), in which charge gradually builds up and shifts voltages and timing, can determine the chip's lifetime and mission success.
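To make the cost of ECC concrete, here is a minimal sketch of a Hamming(7,4) code, a textbook single-error-correcting code. It is used here only as an illustration and is not necessarily what any particular space-grade memory uses. It stores 4 data bits in 7 bits and can repair one flipped bit, such as the result of a single-event upset.

```python
def hamming74_encode(data_bits):
    """Encode 4 data bits [d1, d2, d3, d4] into a 7-bit codeword."""
    d1, d2, d3, d4 = data_bits
    p1 = d1 ^ d2 ^ d4  # parity over positions 1, 3, 5, 7
    p2 = d1 ^ d3 ^ d4  # parity over positions 2, 3, 6, 7
    p3 = d2 ^ d3 ^ d4  # parity over positions 4, 5, 6, 7
    # Codeword layout, positions 1..7: p1 p2 d1 p3 d2 d3 d4
    return [p1, p2, d1, p3, d2, d3, d4]


def hamming74_decode(codeword):
    """Correct at most one flipped bit and return the 4 data bits."""
    c = list(codeword)
    s1 = c[0] ^ c[2] ^ c[4] ^ c[6]
    s2 = c[1] ^ c[2] ^ c[5] ^ c[6]
    s3 = c[3] ^ c[4] ^ c[5] ^ c[6]
    error_pos = s1 + 2 * s2 + 4 * s3  # 1-based position, 0 means no error
    if error_pos:
        c[error_pos - 1] ^= 1  # flip the faulty bit back
    return [c[2], c[4], c[5], c[6]]


word = [1, 0, 1, 1]
stored = hamming74_encode(word)
stored[4] ^= 1  # simulate a single-event upset in one cell
assert hamming74_decode(stored) == word
```

In this toy code, 3 of every 7 stored bits are check bits, and every read passes through the syndrome logic. Wider codes spread the overhead across more data bits, but the area, power, and delay costs described above never disappear.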

For this reason, space hardware is protected with shielding such as aluminum, but complete isolation is impossible because the weight of the shielding adds a significant burden during launch. Therefore, radiation-resistant chips

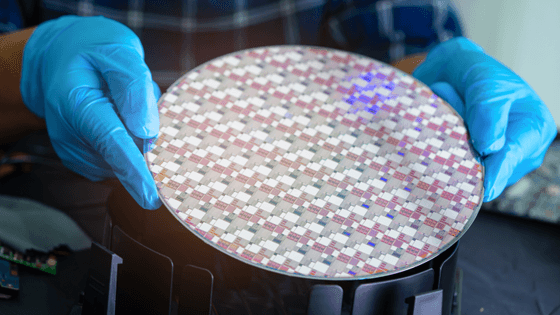

Köhntopp points out that it's physically impossible to operate a GPU like the NVIDIA H100, which is essential for AI training and inference, in space. The H100's GH100 die uses TSMC's 4N process and contains 80 billion transistors in an area of approximately 814 square millimeters. However, Köhntopp believes that a chip with such a fine structure would not last more than half a day in space.

by Geekerwan

According to a 2017 document from the European Space Agency (ESA), the process technology hardened for space use is a 65nm process, whose structures take up roughly 250 times the area of those on the H100. A chip built on this space-hardened process with the same number of transistors as the H100 would be a huge slab of about 0.2 square meters, which would increase energy consumption and significantly reduce clock speeds. Even with the relatively new 28nm process, it would require an area of about 40,000 square millimeters, 50 times larger than the H100, making it virtually impossible to operate such a GPU in space, Köhntopp argued.
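The area figures can be roughly reproduced with simple scaling arithmetic. The sketch below assumes that "4N" can be treated as a 4 nm feature size and that area grows with the square of the node name. Both are simplifications, since node names are marketing labels rather than physical dimensions.

```python
H100_AREA_MM2 = 814.0  # die area quoted in the text
H100_NODE_NM = 4.0     # assumption: treat TSMC "4N" as a 4 nm feature size


def scaled_area_mm2(node_nm):
    """Die area if every feature grew linearly from 4 nm to node_nm."""
    linear_factor = node_nm / H100_NODE_NM
    return H100_AREA_MM2 * linear_factor**2  # area scales with length squared


for node in (65.0, 28.0):
    area = scaled_area_mm2(node)
    print(f"{node:.0f} nm: {area / H100_AREA_MM2:.0f}x the area, "
          f"{area:,.0f} mm^2 ({area / 1e6:.3f} m^2)")
```

Under these assumptions the 65nm result is about 264 times the area (about 0.21 square meters) and the 28nm result is 49 times (just under 40,000 square millimeters), in line with the rounded figures of 250 times and 50 times quoted above.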

Furthermore, the overhead of redundancy is extremely heavy when operating a computer in space. If triple modular redundancy (TMR) is used, a chip with 80 billion transistors would effectively behave like a GPU fragment with roughly 25 billion transistors. In addition, to dissipate heat efficiently in a vacuum, the operating temperature must be raised, but higher temperatures increase leakage current, worsening power efficiency and further reducing clock speeds. As a result, computing resources in space will be fragmented and far inferior to those on Earth.

Even if a data center is installed in space, there are still communication barriers to accessing it from the ground. In addition to limitations on bandwidth, latency, and spectrum, data centers in low orbit move quickly through the sky, limiting communication from a given location to a few minutes every 90 minutes. Continuously tracking satellites and maintaining communication consumes a large amount of communication capacity on the ground, and also creates a disadvantage in terms of latency.
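The "few minutes every 90 minutes" figure follows from basic orbital geometry. The sketch below assumes a circular orbit, a satellite passing directly overhead, a non-rotating Earth, and illustrative values of 550 km altitude and a 10 degree minimum elevation angle. None of these numbers come from the original post.

```python
import math

MU_EARTH = 3.986004418e14  # Earth's gravitational parameter, m^3 / s^2
R_EARTH = 6_371_000.0      # mean Earth radius, m


def orbital_period_s(altitude_m):
    """Period of a circular orbit at the given altitude (Kepler's third law)."""
    a = R_EARTH + altitude_m
    return 2 * math.pi * math.sqrt(a**3 / MU_EARTH)


def max_pass_s(altitude_m, min_elevation_deg=10.0):
    """Longest possible pass above min elevation for an overhead pass,
    ignoring Earth's rotation (a simplification)."""
    r = R_EARTH + altitude_m
    el = math.radians(min_elevation_deg)
    # Earth-central angle between the ground station and the satellite
    # at the moment it sits exactly at the minimum elevation.
    central = math.acos(R_EARTH * math.cos(el) / r) - el
    return orbital_period_s(altitude_m) * (2 * central) / (2 * math.pi)


altitude = 550e3  # hypothetical LEO altitude, m
print(f"period: {orbital_period_s(altitude) / 60:.1f} min")
print(f"best-case pass: {max_pass_s(altitude) / 60:.1f} min")
```

Even in this best case the satellite is usable from one ground site for only a small fraction of each orbit, and passes that are not directly overhead are shorter still.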

Köhntopp concluded that, when factors such as launch costs and the impact of a spacecraft re-entering the atmosphere at the end of its life are also considered, operating a data center in space would be undeniably difficult.
