CEO Sam Altman announces contract review after OpenAI agrees to 'use of AI for mass government surveillance'

OpenAI signed a contract with the U.S. Department of Defense (the Department of War) allowing military use of its AI, while stating that it does not permit mass surveillance of citizens or the development of autonomous weapons. Meanwhile, Anthropic, which had worked with the department before OpenAI, explicitly prohibited these two activities, and the Department of Defense responded by terminating its contract and labeling the company a national security risk. Why was only OpenAI allowed to sign a deal when its terms appear to be the same? The likely reason is that the OpenAI contract leaves room for interpretation.

How OpenAI caved to the Pentagon on AI surveillance | The Verge

https://www.theverge.com/ai-artificial-intelligence/887309/openai-anthropic-dod-military-pentagon-contract-sam-altman-hegseth

OpenAI says its US defense deal is safer than Anthropic's, but is it? | CIO

https://www.cio.com/article/4139511/openai-says-its-us-defense-deal-is-safer-than-anthropics-but-is-it.html

Anthropic offered custom models of its AI, 'Claude,' to government agencies, but explicitly prohibited 'mass surveillance of citizens' and 'development of fully autonomous weapons.' The Department of Defense approached Anthropic about relaxing the restrictions, but Anthropic refused, forcing a choice between terminating the relationship and lifting the restrictions. Ultimately, Anthropic held firm, and the Department of Defense terminated the relationship. It also retaliated by designating Anthropic a 'supply chain risk,' a designation that had never before been applied to a domestic company, restricting use of its products by government agencies.

President Trump claims that Anthropic's left-wing fanatics have attempted to control the U.S. military, and orders the severance of ties. Secretary of Defense Hegseth will designate Anthropic as a 'supply chain risk' - GIGAZINE

Shortly after, OpenAI announced that it had agreed to an AI utilization plan with the Department of Defense. It initially seemed that OpenAI would not impose the same restrictions as Anthropic, but its restrictions turned out to be almost identical, including 'not allowing any use of AI technology for surveillance of the public in the United States,' 'not directly controlling autonomous weapons systems involving the use of lethal force,' and 'not using AI for advanced automated decision-making that significantly affects individual rights, such as social credit systems.' This raised the question of why OpenAI was able to enter into the agreement when Anthropic was not.

OpenAI claims that 'our agreement has more guardrails than any other company, including Anthropic,' but the full text of the contract has not been made public, suggesting it may leave room for interpretation that Anthropic's terms did not.

AI excels at pattern recognition, allowing it to build an accurate portrait of a person by combining seemingly innocuous data, such as location data, web browsing history, financial data, surveillance footage, and voter registration information. Anthropic claims it sought a contract that explicitly prohibited this practice, but OpenAI states that 'in intelligence activities, the handling of personal information complies with the Fourth Amendment to the U.S. Constitution, the National Security Act of 1947, the Foreign Intelligence Surveillance Act of 1978, Executive Order 12333, and related Department of Defense directives for specific foreign intelligence purposes.'

The Verge, a technology media outlet, pointed out that 'these laws are open to interpretation, and since 9/11, intelligence agencies have strengthened their surveillance systems and implemented multiple mass surveillance programs targeting domestic espionage activities, such as the international surveillance network PRISM exposed by former National Security Agency contractor Edward Snowden.' The Verge added that the government has broadly interpreted what counts as 'technically legal' in order to justify widespread mass surveillance programs. According to The Verge, if OpenAI's description of the contract is accurate, the Department of Defense could still justify such surveillance.

In the midst of this uproar, OpenAI CEO Sam Altman accepted questions publicly on X and promised to revise the contract based on several points raised. The full translation of Altman's statement is below.

We have been working to make some additions to our contract with the DoW (Department of War) to make the principles very clear.

1. We amend the contract to add the following language to the existing content:

'In accordance with applicable law, including the Fourth Amendment to the United States Constitution, the National Security Act of 1947, and the Foreign Intelligence Surveillance Act of 1978, this AI system may not be used to intentionally conduct domestic surveillance of U.S. citizens or nationals. For the avoidance of doubt, the Department of Defense understands this restriction to prohibit the intentional tracking, surveillance, or monitoring of U.S. citizens or nationals, including the procurement or use of commercially obtained personal or identifiable information.'

Protecting the civil liberties of Americans is extremely important. We wanted to be particularly clear about this issue with regard to commercially obtained information because it has been a major concern. As with everything we do in our iterative evolution, we will continue to learn and improve.

I think this is an important change, and our team and the DoW team have done a really good job on this.

2. The Department has also confirmed that our services will not be used by Department of Defense intelligence agencies (e.g., the National Security Agency). Providing services to these agencies would require additional contract amendments.

3. To be very clear, we want to work through the democratic process. Important decisions about society should be made by government. We want to share our expertise, speak out in defense of the principles of freedom, and have a seat at the table, but we are very clear about how the system works (as many of you have asked: if I received an order that I believed was unconstitutional, I would rather go to jail than obey it).

4. There are many areas where the technology is still incomplete and where the necessary trade-offs for safety are not fully understood. We will work carefully with the DoW, using technical safeguards and other methods.

5. There is one thing I think I did wrong. I should not have rushed to announce this on Friday. The issues are very complex and require clear communication. We were genuinely trying to prevent the situation from escalating and avoid a worse outcome, but I think in the end it came across as opportunistic and sloppy. This has been a good learning experience for me as I face more significant decision-making in the future.

During our conversation over the weekend, I reiterated that I believe Anthropic should not be designated a supply chain risk and that I would like the DoW to offer the company similar terms to those agreed to with us.

We will be holding a company-wide meeting tomorrow morning to answer further questions.

Here is re-post of an internal post:

We have been working with the DoW to make some additions in our agreement to make our principles very clear.

1. We are going to amend our deal to add this language, in addition to everything else:

'• Consistent with applicable laws,…

— Sam Altman (@sama) March 3, 2026

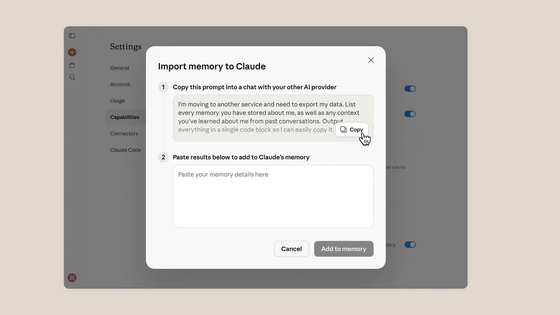

Perhaps because users have welcomed Anthropic's adherence to its policy, Claude has risen to the top of the App Store rankings, while a campaign to cancel OpenAI's ChatGPT has erupted. Taking advantage of this, Anthropic launched an AI migration campaign, made paid features free, and released a data migration tool.

Anthropic opens Claude's memory function to free users to acquire AI switching users & adds memory import function - GIGAZINE
