Anthropic releases 'AI Fluency Index,' which examines whether humans are using AI effectively

Humans are incorporating AI into their lives, but not everyone is using it effectively. A survey conducted by Anthropic, the developer of the AI tool 'Claude,' revealed that people use AI in different ways.

Anthropic Education Report: The AI Fluency Index \ Anthropic

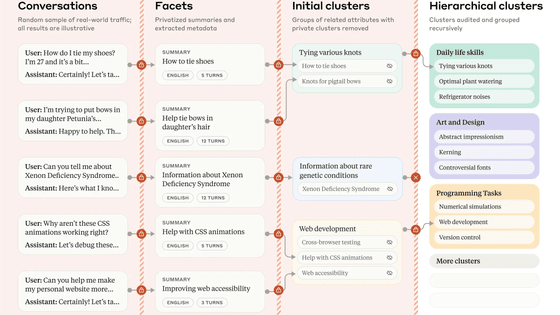

Some AI users simply trust the answers the AI outputs, while others try to improve those answers by giving the AI new instructions or by verifying information through other routes. Anthropic created the 'AI Fluency Index' as a measure of how adaptively people behave when working with AI, which it calls 'fluency,' and conducted a survey to track user behavior.

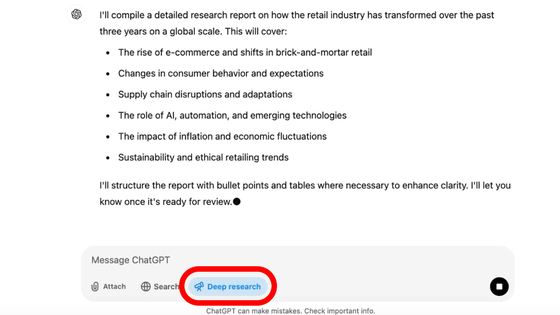

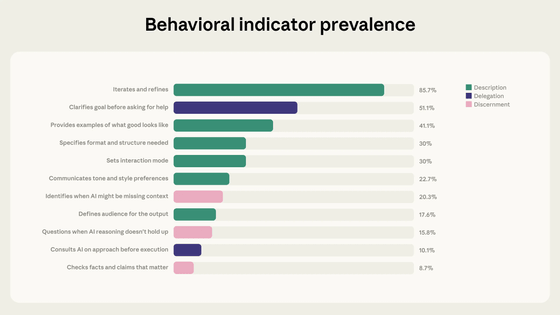

Anthropic listed 24 'fluency' behaviors that humans are likely to exhibit when using AI. It then analyzed 9,830 conversations that took place on Anthropic's website, Claude.ai, over a seven-day period in January 2026. Thirteen of the 24 behaviors occur outside Claude.ai and could not be tracked, so the conversations were classified against the remaining 11 behaviors to determine which were most prevalent.
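To make the idea of behavior prevalence concrete, here is a minimal sketch of how the share of conversations showing each behavior could be computed. It assumes each conversation has already been labeled with the set of behaviors observed in it. The function name, the behavior labels, and the toy data are all hypothetical illustrations, not Anthropic's data or pipeline.

```python
from collections import Counter

def behavior_prevalence(conversations):
    """Return {behavior: percent of conversations in which it appears}."""
    if not conversations:
        return {}
    counts = Counter()
    for behaviors in conversations:
        # Count each behavior at most once per conversation.
        counts.update(set(behaviors))
    total = len(conversations)
    return {b: round(100 * n / total, 1) for b, n in counts.items()}

# Hypothetical toy data: four conversations with made-up labels.
toy = [
    {"iteration_and_refinement", "clarifies_goal"},
    {"iteration_and_refinement"},
    {"clarifies_goal", "checks_facts"},
    set(),
]
rates = behavior_prevalence(toy)
```

Because a single conversation can show several behaviors, percentages computed this way are not expected to sum to 100.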

The results are as follows. In the chart, green denotes the theme of 'Explanation,' purple 'Delegation,' and pink 'Judgment.'

Iteration and refinement: 85.7%

Clarify goals before asking for help: 51.1%

Provide examples of good output: 41.1%

Specify the required format and structure: 30%

Set the interaction mode: 30%

Express preferences for tone and style: 22.7%

Identify missing context: 20.3%

Define the target audience for the deliverable: 17.6%

Question the AI's reasoning when it does not hold up: 15.8%

Consult the AI about the approach before execution: 10.1%

Verify important facts and assertions: 8.7%

One of the most striking trends in the data is the association between iteration and refinement and all other AI fluency behaviors. 85.7% of the conversations in the sample involved 'iteration and refinement,' where the user builds on previous exchanges to refine the work rather than simply accepting the initial answer and moving on to a new task. These conversations also showed significantly higher rates of other fluency behaviors.

On average, conversations that included iteration and refinement showed 2.67 additional fluency behaviors, roughly double the average of 1.33 in conversations that did not. This was particularly evident in behaviors that evaluate Claude's output: in conversations with iteration and refinement, users were 5.6 times more likely to question Claude's reasoning and 4 times more likely to identify missing context.
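The comparison between the two averages can be checked with simple arithmetic. The snippet below is only an illustration using the two figures quoted in this article; the variable names are made up for the example.

```python
# Average number of additional fluency behaviors, as quoted in the article.
with_iteration = 2.67
without_iteration = 1.33

# How many times larger the first average is than the second.
ratio = with_iteration / without_iteration
print(f"ratio: {ratio:.2f}")
```

The ratio comes out at about 2.01, so the first figure is almost exactly twice the second.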

12.3% of the conversations in the sample produced artifacts such as code, documents, or interactive tools. These conversations showed a significant increase in behaviors categorized under the themes of 'Explanation' and 'Delegation.' For example, compared to conversations that did not produce artifacts, users more often clarified goals, specified formats, provided examples, and iterated. In other words, they gave the AI more specific direction early in the work.

However, this increased directiveness does not translate into more evaluation or critical judgment. On the contrary, in conversations that produced artifacts, users were less likely to identify missing context, to fact-check, or to question the model's reasoning.

Regarding this, Anthropic said, 'It's possible that because Claude is producing a complete, functional-looking artifact, it doesn't feel necessary to raise any additional questions. If it looks complete, it may be treated as a finished product. Or, users may be doing some kind of validation outside of Claude.ai, such as running and testing the code themselves or sharing it with colleagues.'

Anthropic said it will treat these results as a baseline for the state of human-AI collaboration, the 'AI Fluency Index,' and use it as a foundation for tracking how human behavior evolves as AI models change.

in AI, Posted by log1p_kr