Google's animal-call classification AI 'Perch 2.0' is helping researchers study whale ecology

Perch 2.0, a bioacoustic model developed by Google DeepMind, was pre-trained primarily on the calls of terrestrial animals such as birds, yet it has been shown to perform extremely well at classifying marine mammal sounds. The results have been published on arXiv, a repository for preprints that have not undergone peer review.

How AI trained on birds is surfacing underwater mysteries

[2512.03219] Perch 2.0 transfers 'whale' to underwater tasks

https://arxiv.org/abs/2512.03219

Perch 2.0 is a foundation model trained on recordings of more than 14,500 species, but its training data contains very little underwater audio. Even so, when it is adapted through transfer learning with only a small amount of labeled data, it outperforms existing models at classifying marine mammals.

Google releases enhanced version of AI 'Perch' that can estimate 'the type and number of living things in the vicinity' from environmental sounds - GIGAZINE

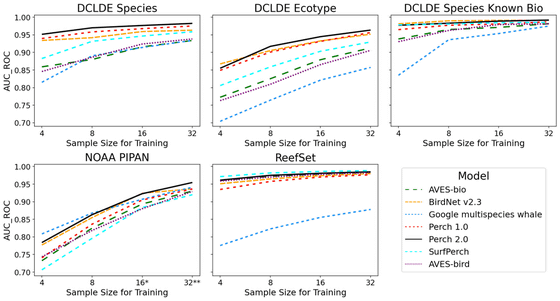

In the study, the researchers tested how well Perch 2.0, a foundation model trained on terrestrial animal sounds, transfers to marine mammal and underwater acoustic classification tasks. Specifically, they performed linear probing, which trains a simple linear classifier on embeddings generated by the frozen model, and compared the results with those of other pre-trained bioacoustic models: Perch 1.0, SurfPerch, the Google Multispecies Whale Model (GMWM), BirdNET V2.3, AVES-bio, and BirdAVES.
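Linear probing keeps the pre-trained model frozen and trains only a linear classifier on top of its embeddings, which is why a small amount of labeled data can be enough. The sketch below is a minimal NumPy illustration of that idea, not the paper's code: the synthetic vectors stand in for real Perch 2.0 embeddings, and the function names, learning rate, and epoch count are illustrative assumptions.

```python
import numpy as np

def l2_normalize(x, eps=1e-12):
    # Scale each embedding to unit length so a fixed learning rate stays stable.
    return x / (np.linalg.norm(x, axis=1, keepdims=True) + eps)

def train_linear_probe(embeddings, labels, num_classes, lr=0.5, epochs=500, l2=1e-4):
    """Fit a softmax (multinomial logistic regression) classifier on frozen embeddings.

    Only W and b are learned; the embedding model itself is never updated.
    """
    n, d = embeddings.shape
    W = np.zeros((d, num_classes))
    b = np.zeros(num_classes)
    onehot = np.eye(num_classes)[labels]
    for _ in range(epochs):
        logits = embeddings @ W + b
        logits -= logits.max(axis=1, keepdims=True)  # numerical stability
        probs = np.exp(logits)
        probs /= probs.sum(axis=1, keepdims=True)
        grad = (probs - onehot) / n  # gradient of mean cross-entropy w.r.t. logits
        W -= lr * (embeddings.T @ grad + l2 * W)
        b -= lr * grad.sum(axis=0)
    return W, b

def predict(W, b, embeddings):
    return np.argmax(embeddings @ W + b, axis=1)

# Hypothetical stand-in for frozen model embeddings: 3 call types, 20 labeled clips each.
rng = np.random.default_rng(0)
num_classes, dim, per_class = 3, 64, 20
centers = 3.0 * rng.normal(size=(num_classes, dim))
X = l2_normalize(np.concatenate([c + rng.normal(size=(per_class, dim)) for c in centers]))
y = np.repeat(np.arange(num_classes), per_class)

W, b = train_linear_probe(X, y, num_classes)
accuracy = float((predict(W, b, X) == y).mean())
print(f"training accuracy: {accuracy:.2f}")
```

This prints training accuracy only for brevity; a real evaluation would score held-out clips instead.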

The experiments used three main datasets. The first, NOAA PIPAN, consists of 30-second recordings of baleen whale calls, covering minke, humpback, sei, blue, fin, and Bryde's whales, as well as anthropogenic noise. The second, ReefSet, contains biological sounds from coral reefs along with the sounds of specific fish, dolphins, and waves. The third, DCLDE 2026, requires distinguishing orcas, humpback whales, and non-biological sounds recorded in the northeastern Pacific.
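Clips such as the 30-second NOAA PIPAN recordings are usually longer than the fixed-length window an audio embedding model accepts. One common approach, not necessarily the one used in the paper, is to embed consecutive windows and average them into one vector per clip. This sketch assumes 5-second windows at 32 kHz (the input format of the original Perch model; treat it as an assumption for Perch 2.0), and `toy_embed` is a hypothetical stand-in for a real model.

```python
import numpy as np

SAMPLE_RATE = 32_000   # assumption: model input sample rate (Hz)
WINDOW_SECONDS = 5.0   # assumption: model input window length (s)

def frame_audio(audio, sample_rate=SAMPLE_RATE, window_s=WINDOW_SECONDS, hop_s=None):
    """Split a 1-D signal into fixed-length windows, zero-padding the final window."""
    win = int(round(window_s * sample_rate))
    hop = win if hop_s is None else int(round(hop_s * sample_rate))
    n_frames = int(np.ceil(max(len(audio) - win, 0) / hop)) + 1
    padded = np.zeros((n_frames - 1) * hop + win, dtype=audio.dtype)
    padded[: len(audio)] = audio
    return np.stack([padded[i * hop : i * hop + win] for i in range(n_frames)])

def toy_embed(window, dim=16, seed=0):
    """Hypothetical stand-in for an embedding model (NOT Perch): a fixed random
    projection of the log-magnitude spectrum."""
    spectrum = np.log1p(np.abs(np.fft.rfft(window)))
    proj = np.random.default_rng(seed).normal(size=(spectrum.shape[0], dim))
    return spectrum @ proj / np.sqrt(spectrum.shape[0])

def clip_embedding(audio, embed_fn=toy_embed):
    """Embed each window, then mean-pool into a single clip-level vector."""
    per_window = np.stack([embed_fn(w) for w in frame_audio(audio)])
    return per_window.mean(axis=0), per_window

# Usage: a synthetic 30-second, 150 Hz tone in place of a real recording.
t = np.arange(30 * SAMPLE_RATE) / SAMPLE_RATE
audio = (0.1 * np.sin(2 * np.pi * 150.0 * t)).astype(np.float32)
clip_vec, window_vecs = clip_embedding(audio)
print(window_vecs.shape, clip_vec.shape)  # (6, 16) (16,)
```

Mean pooling is the simplest choice; keeping the per-window vectors instead would let a classifier localize a call within the clip.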

The results show that Perch 2.0 performs consistently well across most of the marine tasks and outperforms the other models in many cases. In particular, its embeddings separate orca subspecies more clearly than those of the other models.

Google attributes the model's strong performance on underwater tasks, despite being trained largely on birds, to the high generalization ability that comes from a large model and vast amounts of training data. From the perspective of neural scaling laws, models trained on large datasets with many classes tend to maintain high performance outside their training domain.

Google also points to the difficulty of bird species classification as a key factor in the model's accuracy. Bird calls often differ only subtly from one species to another, yet the model must accurately distinguish among a huge number of classes (more than 14,500 species), so during training it develops a strong ability to capture subtle acoustic features. This honed feature extraction then carries over to identifying underwater whale calls and killer whale ecotypes.

A tutorial on classifying whale calls with NOAA archive data has also been released, and agile modeling, which lets researchers build new classifiers quickly from a handful of examples, is expected to greatly improve the efficiency of marine ecosystem monitoring and conservation. Google says the technology is becoming an essential tool for responding quickly as whale vocalizations change and new sounds are discovered.
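As we understand Google's description of agile modeling, a key step is searching a large archive of pre-computed embeddings for clips that resemble one example call, so that a person can quickly confirm or reject the matches and use them to train a lightweight classifier. The sketch below shows only that search step, using cosine similarity; the random "archive", the planted matches, and the function name `cosine_search` are illustrative assumptions, not part of Google's tutorial.

```python
import numpy as np

def cosine_search(query, bank, k=5):
    """Return indices and cosine similarities of the k bank rows closest to query."""
    q = query / np.linalg.norm(query)
    bank_unit = bank / np.linalg.norm(bank, axis=1, keepdims=True)
    scores = bank_unit @ q
    top = np.argsort(-scores)[:k]  # highest similarity first
    return top, scores[top]

# Hypothetical archive of 1,000 clip embeddings with three near-copies of one call type.
rng = np.random.default_rng(42)
bank = rng.normal(size=(1000, 32))
target_call = rng.normal(size=32)
planted = [10, 250, 777]
for i in planted:
    bank[i] = target_call + 0.1 * rng.normal(size=32)

# A new example of the same call is used as the search query.
query = target_call + 0.1 * rng.normal(size=32)
indices, scores = cosine_search(query, bank, k=3)
print(indices, np.round(scores, 3))
```

In a full workflow, the confirmed matches would become labeled training examples for a linear classifier, and the search, label, and retrain loop would repeat until the classifier is good enough.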
