Apple sued by West Virginia for failing to detect and act against child sexual abuse material on iCloud

The state of West Virginia has filed a lawsuit against Apple, alleging that the company failed to act against the use of iCloud to store and distribute child sexual abuse material (CSAM).

West Virginia Attorney General Sues Apple for Role in Distribution of Child Sexual Abuse Material | Office of the WV Attorney General John B. McCuskey

'Protecting the privacy of child predators is completely unacceptable and violates West Virginia law,' West Virginia Attorney General JB McCuskey said in a statement. 'Apple has refused to do the moral thing to police itself. That's why I'm suing to require them to follow the law and report CSAM so children can't be harmed again by storing and sharing it.'

Federal law requires US-based companies to report detected CSAM to the National Center for Missing and Exploited Children (NCMEC). In 2023, Meta reported over 30.6 million cases and Google reported 1.47 million, while Apple reported only 267. Citing this gap, McCuskey argues that 'Apple's failure to deploy detection technology is not a passive oversight, but a choice.'

The West Virginia Attorney General's Office is seeking compensatory and punitive damages from Apple, an injunction requiring the implementation of effective CSAM detection measures, and equitable remedies requiring the design of more secure products going forward.

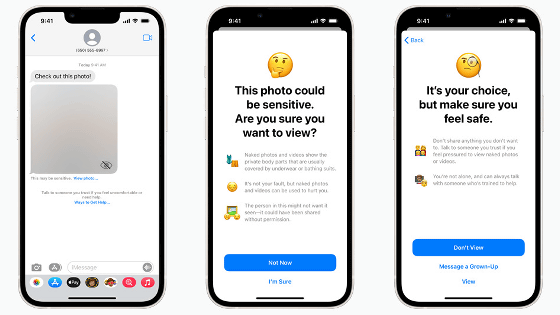

Apple did announce a policy in 2021 to 'scan the contents of messaging apps and iCloud to prevent child sexual exploitation.'

Apple announces that it will scan iPhone photos and messages to prevent child sexual exploitation, sparking outcry from the Electronic Frontier Foundation and others, claiming that the move will undermine user security and privacy - GIGAZINE

However, human rights groups strongly opposed the plan, saying it would 'put children at risk.'

The feature's implementation was reportedly scrapped.

Apple scraps implementation of 'system to check iCloud Photos for child sexual abuse images' - GIGAZINE

Apple has since expressed opposition to the proposed UK Online Safety Bill, which would require companies to scan messages in messaging apps.

Taken together, these events suggest that Apple tried to take measures but was unable to do so because of external pressure.

The West Virginia Attorney General's Office said Apple internally described iCloud as 'the largest platform for distributing child pornography.'

in Note, Posted by logc_nt