YouTube removes video explaining how to install Windows 11 on unsupported machines due to 'risk of physical harm,' highlighting the limitations of AI moderation

Support for Windows 10 will

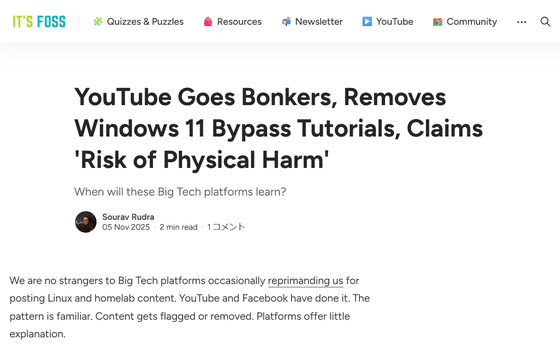

YouTube Goes Bonkers, Removes Windows 11 Bypass Tutorials, Claims 'Risk of Physical Harm'

https://itsfoss.com/news/youtube-removes-windows-11-bypass-tutorials/

CyberCPU Tech, a technology YouTuber with over 300,000 subscribers, uploaded a video on October 20, 2025, titled 'Foolproof Way To Create A Local Account In 25H2.' The video explains how to install and configure 25H2, the annual update for Windows 11 released in September 2025, while bypassing official requirements such as a Microsoft account and hardware conditions such as Secure Boot.

Foolproof Way To Create A Local Account In 25H2 - YouTube

According to Rich of CyberCPU Tech, this video was removed after being flagged as violating YouTube's Community Guidelines. He also uploaded a video titled 'Unlock Windows 11 25H2 on Unsupported Hardware' on October 20, 2025, which also received a warning for violating YouTube's Community Guidelines.

When YouTube issues a warning for violating its Community Guidelines, it states which section of the guidelines was violated. In the case of CyberCPU Tech's two videos, Rich said they were flagged as 'promoting dangerous or illegal activity that poses a risk of serious bodily harm or death.' Rich immediately appealed, but his first appeal was rejected within 45 minutes and his second within just five minutes, which made him feel that the decisions had been made automatically by AI rather than by a human.

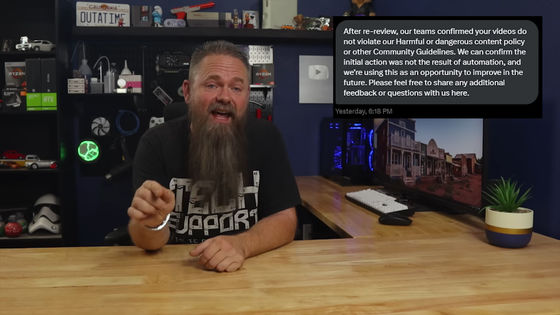

About two weeks later, on November 3, 2025, Rich reported that his appeal had been accepted and that the video removed for violating the Community Guidelines had been restored. According to Rich, he received a message from YouTube stating, 'After a review, we have determined that your video does not violate our policy regarding harmful or dangerous content or other guidelines.' Rich suspected that YouTube's AI moderation had made a mistake, but YouTube maintained that neither the initial removal nor the rejection of his appeals was automated.

Similar cases have been reported on other channels. Enderman, a YouTube channel that posts videos about Windows experiments and malware, posted a video titled 'My channel is getting terminated' on November 4, 2025. According to the video, a subchannel of Enderman's was deemed to be linked to 'Coffin Star Rail Play' (a channel that has since been deleted), which is believed to have distributed leaked information about the game 'Honkai: Star Rail' and had received repeated copyright infringement strikes. YouTube suspends an account after three strikes, a policy known as the 'three-strike system.' Although Enderman repeatedly denied any connection to the unrelated channel, the subchannel continued to receive strikes and was ultimately banned.

My channel is getting terminated - YouTube

In the video, Enderman cited 'AI' as the reason these problems occur. YouTube relies on AI to moderate a huge number of videos: if a video contains copyright-infringing music, violent language, or sexual imagery, the AI automatically detects it, sends a warning to the channel, and suspends monetization or publication of the video. Enderman said, 'I didn't realize that drastic measures like channel termination could be handled by AI alone. All of us are powerless against AI systems.'

It's FOSS, the outlet that reported on these incidents, criticized YouTube, saying, 'YouTube's tyranny has been exposed.' It pointed out that a human reviewer would clearly see that CyberCPU Tech's technical videos pose no risk to life and that the technical channel Enderman is not linked to any gaming leak channel. YouTube's reliance on AI moderation, even for decisions as severe as channel termination, highlights how difficult it is for automated systems to distinguish legitimate content from harmful content.

On the other hand, there are many cases where spam videos and messages that should actually be blocked are not deleted by AI and reach viewers. It's FOSS states, 'What these platforms need is human oversight. Automation can help, but it cannot replace human judgment in complex cases.'

in AI, Video, Web Service, Posted by log1e_dh