The chief scientific officer of AI development platform Hugging Face is concerned that AI will not deliver true breakthroughs if development continues as it is.

Dario Amodei of AI company Anthropic has predicted that advances in biology and medicine that would take human scholars 50 to 100 years to achieve could be compressed into 5 to 10 years with the use of AI. Amodei described this as a 'compressed 21st century.' However, Thomas Wolf, chief scientific officer of AI development platform Hugging Face, has expressed concern that if AI development continues as it is, we will end up with nothing more than a 'yes man on a server,' and the 'compressed 21st century' will not come about.

I shared a controversial take the other day at an event and I decided to write it down in a longer format: I'm afraid AI won't give us a 'compressed 21st century'.

— Thomas Wolf (@Thom_Wolf) March 6, 2025

The 'compressed 21st century' comes from Dario's 'Machine of Loving Grace' and if you haven't read it, you probably…

Hugging Face's chief science officer worries AI is becoming 'yes-men on servers' | TechCrunch

https://techcrunch.com/2025/03/06/hugging-faces-chief-science-officer-worries-ai-is-becoming-yes-men-on-servers/

In October 2024, Amodei published an essay titled 'Machines of Loving Grace,' in which he predicts that within the next few years there will emerge a country of Einsteins sitting in data centers, and that the scientific discoveries of the 21st century will be compressed into a span of just five to ten years: a 'compressed 21st century.'

When Wolf first read the essay, he thought, 'Yes, AI may change science in five years,' but when he read it again five days later, he thought, 'Most of it is wishful thinking.' Based on his own experience, he said that if AI development continues as it is, the person in the data center will not be Einstein but just a yes man.

Wolf's view is rooted in his own struggles. He grew up in a small village and was a top student who could even predict which questions would appear on school exams. It was not until he enrolled in a doctoral program at MIT and became a researcher that he realized he was an average, ordinary one. Specifically, he says he could not create anything 'that wasn't in the books' beyond variations on existing theories, and that he found it difficult to challenge the status quo or question what he had learned. 'I got good grades, but I was no Einstein,' Wolf recalls.

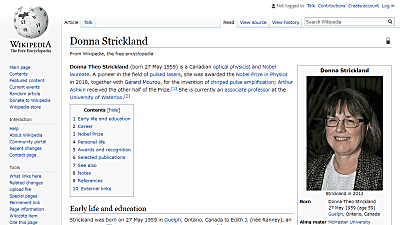

In contrast, Einstein failed the entrance exam to the Zurich Polytechnic because his overall score was too low. Edison, known as the king of inventors, was described by his elementary school teacher as 'addled' and is said to have left school after only three months. Barbara McClintock, a cytogeneticist, was criticized for her 'strange way of thinking' but later won the Nobel Prize in Physiology or Medicine.

Based on these examples, Wolf said it is a mistake to think that talented students, if they simply keep improving along the same path, will turn out to be geniuses like Einstein or Newton. He went on to say that what is needed for true breakthroughs is 'asking the right questions and challenging even what we have learned.'

For example, Copernicus' heliocentric (sun-centered) theory contradicted the geocentric (earth-centered) theory, the accepted view at the time, which held that the sun moves around the earth. In machine learning terms, this would be an assertion that contradicts the training dataset.

In current AI development, benchmarks with grand names such as 'Humanity's Last Exam' and 'FrontierMath' are used to gauge whether intelligence has improved. They consist of difficult questions packed with specialized knowledge from a wide range of fields, but each has a clear final answer. In February 2025, OpenAI's 'Deep research' recorded a then-unprecedented score of 26.6% on Humanity's Last Exam.

In 'Humanity's Last Exam,' where the highest answer accuracy was about 9%, OpenAI's Deep research recorded more than 26% - GIGAZINE

However, Wolf said that these problems were 'exactly what I was good at,' and suggested that what is needed to produce an Einstein is not 'a system that knows all the answers' but 'a system that can ask questions that no one has thought of or dared to ask.'

Wolf explained that AI, which should already have 'all the knowledge of humanity,' is not leading to breakthroughs because it is 'only filling in the gaps of what humanity already knows.' If the aim is scientific breakthroughs, he argued, we should move to benchmarks that test whether an AI model can do the following:

- Challenge its own training-data knowledge

- Take bold approaches that contradict accepted facts

- Make general proposals based on small hints

- Ask non-obvious questions that can lead to new research avenues
